Can content encryption and DRM be split between server and client to allow multiple vendors to inter-operate, bringing all the benefits of an open market? I believe it can, and should. Join me in a trip down memory lane with a serious goal – Open Systems for DRM.

The 80’s: bad for clothes, good for technology (music debatable)

Cast your mind back (if you’re old enough) to 1982. Apart from wearing some awful clothes, and listening to some quite decent music alongside a lot of trash, I was writing BASIC (in both senses of the word) games and controlling a home-made floor ‘turtle’ on a BBC Micro. One of the great things about ‘the Beeb’ was that its software APIs and hardware ports were well-documented and hence there was a large third-party market of software and hardware add-ons which used them; indeed my first two real jobs were with companies that supplied networking and robotics add-ons.

Little did I know then that this model was unusual in the ‘Big Iron’ world, where if you bought a mainframe or mini-computer from, say, IBM or DEC, you were pretty much forced to buy all your peripherals and software from them as well. It wasn’t until 1984 that the Unix world came together and defined the concept of an “Open System” in which APIs and interfaces were defined, published and maintained so that equipment and software from multiple vendors could inter-operate. In 1985, the first Interop conference brought vendors together to agree to use a common networking standard, TCP/IP, and the Internet became more than just a military research project.

DRM: Stuck in the 80’s?

Now fast-forward to 2013. I’m not sure the clothes have improved much, and the music certainly hasn’t, but now we take the open formats and protocols that power the World-Wide Web, e-commerce and digital TV completely for granted – but not in everything… Consider the world of content encryption and DRM, particularly in our world of video services. It is still mostly the case that DRM systems require you to use a head-end encryptor, key server and client implementation all from the same company, because their methods of signalling between the head-end and client, whether through in-band private information in the stream or client-server protocols, are closed and proprietary. Just like in the old days of computing and networking, this reduces choice and prevents innovation.

It is also widely recognised that open protocols and systems are inherently more secure than closed, proprietary ones. This seems counter-intuitive at first thought because surely the closed nature of the protocol adds ‘work factor’ for an attacker? This might be true for a short while but it is not long before even closed protocols are reverse engineered and their faults become evident and ripe for attack. On the other hand, a system using well-proven open protocols will have had the benefit of decades of the world’s best security researchers trying to break it.

So would it be possible to apply an Open Systems approach to DRM? I believe so, and Packet Ship can demonstrate this right now.

HLS Encryption

Roger Pantos, author of Apple’s HTTP Live Streaming (HLS) specification, was either very far-sighted or just wanted to build the simplest possible working encryption system, or most likely both. Either way, the encryption / DRM scheme in HLS is beautiful in its simplicity and far-reaching in its implications.

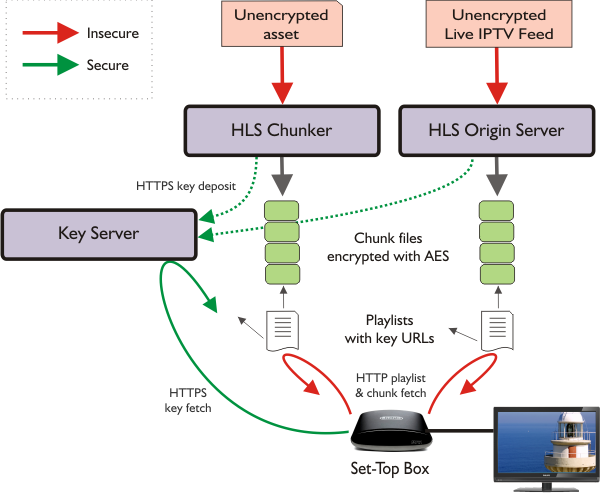

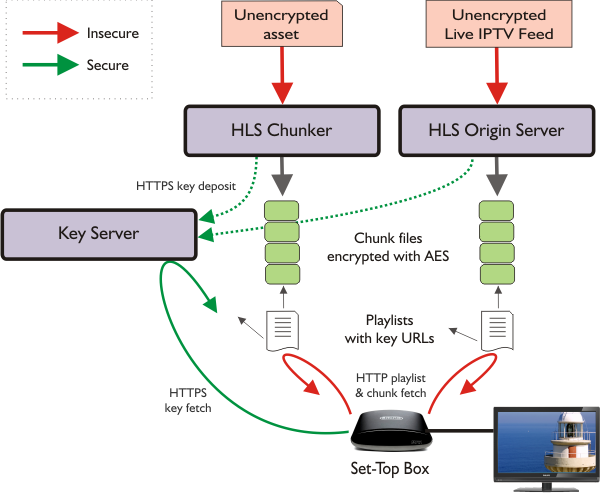

Essentially the HLS encryption system works like this:

- Something at the head-end (either an offline chunker tool for VOD or an Origin server for live TV) encrypts the content.

- The playlist that describes the stream also includes a pointer to a web service where the client can fetch the key for the encryption.

- The client fetches the key and decrypts the content.
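To make the second step concrete, here is a minimal sketch of how a client might find the key pointer in a playlist. The `#EXT-X-KEY` tag and its `METHOD`, `URI` and `IV` attributes come from the HLS specification; the function name, the example playlist and the `example.com` hosts are made up for illustration, and a real client would handle more cases (multiple key tags, key rotation, `METHOD=NONE`).

```python
import re
from urllib.parse import urljoin

# One KEY=VALUE attribute; the value is either a quoted string or a bare token.
_ATTR = re.compile(r'([A-Z0-9-]+)=("[^"]*"|[^",]*)')

KEY_TAG = "#EXT-X-KEY:"


def find_key_info(playlist_text, playlist_url):
    """Return (method, absolute_key_url, iv) for the first EXT-X-KEY tag, or None."""
    for line in playlist_text.splitlines():
        line = line.strip()
        if not line.startswith(KEY_TAG):
            continue
        attrs = {k: v.strip('"') for k, v in _ATTR.findall(line[len(KEY_TAG):])}
        uri = attrs.get("URI")
        # The key URI may be relative to the playlist's own URL.
        key_url = urljoin(playlist_url, uri) if uri else None
        return attrs.get("METHOD"), key_url, attrs.get("IV")
    return None


# Hypothetical playlist, for illustration only.
EXAMPLE_PLAYLIST = """#EXTM3U
#EXT-X-VERSION:3
#EXT-X-TARGETDURATION:10
#EXT-X-MEDIA-SEQUENCE:0
#EXT-X-KEY:METHOD=AES-128,URI="https://keys.example.com/key?id=42"
#EXTINF:10.0,
segment0.ts
"""

if __name__ == "__main__":
    print(find_key_info(EXAMPLE_PLAYLIST, "https://cdn.example.com/live/index.m3u8"))
```

Note that nothing here is vendor-specific: any client that can read the playlist can discover where to ask for the key.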

Looking at the security in more detail:

- The content is encrypted with AES-128 CBC, widely regarded as effectively unbreakable

- The web service URL will be HTTPS – the foundation of Web security – so the key cannot be ‘snooped’

- The key can be served by a secure key server which will check the client is allowed to receive it

It is this last part on which the security of the whole system depends. Most simply, the server might rely on an existing authentication of the client (communicated through session cookies) to decide whether to release the key or not. I think this was probably the original intention of the HLS encryption scheme, and Apple’s guidelines still recommend it.

Unfortunately, although this can ensure the client has access to the content, it cannot ensure that the client is trusted not to leak the content once it has obtained it. In particular, an attacker could fairly easily spoof the requests being made by a legitimate client performing a genuine purchase, obtain the key and decrypt the content for onward distribution through file-sharing networks, pirate DVDs and Blu-rays, or whatever. What is required is for the server to be able to trust that the client is a genuine device it wishes to serve content to, and that the client has not been modified to allow either the key or the content itself to ‘leak’ into the hands of an attacker.

Securing the client – and proving it

The first stage of creating a trusted, secure client is of course actually securing it. There are numerous techniques for this already in use by DRM providers and client developers: code signing, memory randomisation, anti-debugging techniques, and so on. Not every client has them by default, and so they may need to be added by a third-party library or ‘app’. However it is done, the content provider can have reasonable confidence that secured content provided to that class of device will stay that way.

But how does the client prove to the server that it is genuine? Happily HTTPS comes to the rescue again. Most people are (dimly, at least) aware that as well as preventing people snooping your data in the network, HTTPS gives you confidence that the server you think you are connecting to with your web browser actually is who it says it is, and not some Bad Guy trying to steal your passwords. Less commonly known is that the exact same principles (X.509 Public Key Certification) can be used to certify to the server that the client is who they say they are as well. This isn’t widely used in the general Web world (except for some high security intranet and banking systems), but the facility is there in every HTTPS/SSL client and server.

So what is required is that the client applies checks to itself to ensure it isn’t compromised, and uses an X.509 Client Certificate to identify itself to the key server. The key server can verify the certificate is validly issued and for a device type that it trusts, and then issue the key.
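As a rough sketch of the server side of this, the Python standard library's `ssl` module can be told to reject any connection that does not present a certificate signed by a given CA, and the verified certificate can then be inspected before a key is released. Everything specific below is an assumption for illustration: the file paths are placeholders, the idea of encoding the device type in the certificate's Organizational Unit field is just one possible convention, and `TRUSTED_DEVICE_TYPES` is a hypothetical operator policy, not any product's actual scheme.

```python
import ssl

# Hypothetical operator policy: device classes trusted to receive keys.
TRUSTED_DEVICE_TYPES = {"example-stb-v2"}


def make_server_context(server_cert, server_key, device_ca):
    """TLS context that refuses clients without a certificate signed by device_ca."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.load_cert_chain(certfile=server_cert, keyfile=server_key)
    ctx.load_verify_locations(cafile=device_ca)
    ctx.verify_mode = ssl.CERT_REQUIRED  # the handshake fails if no valid client cert
    return ctx


def subject_field(peer_cert, name):
    """Pull one subject field out of the dict returned by SSLSocket.getpeercert()."""
    for rdn in peer_cert.get("subject", ()):
        for key, value in rdn:
            if key == name:
                return value
    return None


def may_release_key(peer_cert):
    """Release the key only to certificates for a trusted device type.

    Assumes (for this sketch only) that the device type is carried in the
    certificate's organizationalUnitName field.
    """
    if not peer_cert:
        return False
    return subject_field(peer_cert, "organizationalUnitName") in TRUSTED_DEVICE_TYPES
```

The TLS layer does the cryptographic verification; the policy check on top of it is a few lines, and is entirely under the operator's control.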

The final point of attack that needs to be covered is the RSA Private Key held by the client, which allows it to sign its requests. If an attacker could obtain that, they could in theory combine it with the client certificate copied from a valid client and pretend to be that client to obtain the key for the content. In devices such as set-top boxes the private key can be stored in secure hardware; for PCs and mobile devices it requires use of key obfuscation techniques in software which make it nearly (but never absolutely) impossible to extract the private key.

Secure – but still Open

This litany of security work at the client – and there is an analogous but different set of issues at the server side – makes it sound like it would have to be organised and implemented by a single company at both client and server. Not so. Despite all the work internally, the client’s requirements to talk to the server are very simple and use only standard techniques:

- Get the key URL from the HLS playlist

- Fetch the key using HTTPS with Client Certification

- Use the key to decrypt the content (using a standard AES-128 CBC decryptor which the client may well have in hardware)
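The fetch step in the list above can be sketched with nothing but the Python standard library. This is an illustration under stated assumptions rather than a reference client: the function names and the certificate and key file paths are hypothetical, the `opener` parameter exists only so the function can be exercised without a network, and a hardened client would keep its private key in secure hardware or obfuscated storage rather than in a plain file. The decryption step is left out because it depends on the AES implementation available on the device.

```python
import ssl
import urllib.request
from urllib.parse import urlsplit

AES_128_KEY_BYTES = 16  # an AES-128 key is 16 bytes


def make_client_context(cert_file, key_file):
    """TLS context that verifies the server and presents our client certificate."""
    ctx = ssl.create_default_context()  # checks the server certificate and hostname
    ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx


def fetch_key(key_url, context, opener=urllib.request.urlopen):
    """Fetch the content key over HTTPS, refusing any non-HTTPS key URL."""
    if urlsplit(key_url).scheme != "https":
        raise ValueError("key URL must use HTTPS")
    with opener(key_url, context=context, timeout=10) as response:
        key = response.read()
    if len(key) != AES_128_KEY_BYTES:
        raise ValueError("expected a 16-byte AES-128 key")
    return key
```

A caller would build the context once with `make_client_context("device-cert.pem", "device-key.pem")` (placeholder paths) and pass it to `fetch_key` together with the key URL taken from the playlist.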

Hence, crucially, the client implementation and the server implementation are entirely separate and can be done by different suppliers. The only link in security terms is that the server is configured by the operator to trust that a given device (identified by its certificate) is secure enough to deliver a key to.

So we now have the potential for a fully secure DRM solution with an open market for both server and client implementations – welcome to the world of Open Systems in DRM!

And in practice?

Theory is all very well, but can it work in practice? The answer is most definitely “Yes”.

Packet Ship has already partnered with Oregan Networks to demonstrate and deploy an end-to-end DRM solution based on these principles. Packet Ship’s OverView:DRM server provides the secure key vault, authentication and authorisation checking for key delivery. Our OverView:Origin and OverView:Ingest products do the encryption and HLS chunking for live and on-demand streams, respectively. Oregan’s Onyx Media Browser provides a hardened streaming client and HLS implementation as well as the web browser and client user interface required to build a compelling service. We have already deployed this solution for a major national telco operator.

Packet Ship is also keen to work with other set-top box manufacturers and streaming client developers to expand this open market. Please contact us and we will provide you with test server software and friendly support to help you to interoperate with our solutions.